Power Up Your VMware to XenServer Migration with Conversion Manager 8.5.0

One of the most common questions when planning a migration from VMware vSphere to XenServer is whether the VMs should be migrated or rebuilt on the new platform. For cases where the answer is migration, a free tool is available as part of the XenServer offering. Conversion…

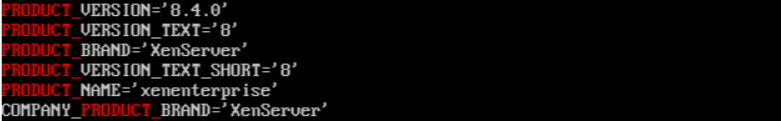

XenServer 8 is really XenServer 8.4

Here’s something you might not know: XenServer 8 is actually XenServer 8.4 under the hood, and has been ever since its release last year. We recognise that labelling the release as XenServer 8 may have caused some confusion, with some customers even under the impression that our prior Citrix…